Based on my years of personal experience and usage, my advice for selecting a monitor depends on your specific needs:

- Professional Gaming: If you’re a professional gamer, I recommend a monitor with a resolution of 1080p or 2K (1440p), 100% sRGB color coverage, and a refresh rate of 144Hz or 165Hz. These specifications give you the smooth, responsive picture that competitive gaming calls for.

- Professional Design Work: If you’re a professional designer, I don’t recommend a high refresh rate monitor, because achieving both high refresh rates and excellent color accuracy in one display is quite challenging. The exception is a substantial budget, in which case you could consider reference-grade displays such as the Sony BVM-X300 or higher. Otherwise, a 60Hz monitor will suffice for your design tasks.

Ultimately, your choice should align with your specific usage requirements and budget constraints. Whether it’s for gaming or professional design work, selecting a monitor that meets your needs and preferences is key to enhancing your overall experience.

Introduction to Display Specifications: Understanding the Basics

Choosing the right monitor specifications ensures the best viewing experience. Understanding the basics of each specification will help you choose a monitor that suits your needs, whether for gaming, entertainment, or professional work.

When you shop for a new monitor, the sheer number of specifications can be overwhelming. This section covers the basics of monitor specifications and explains how they shape your viewing experience.

Monitors come in various shapes and sizes, and their specifications affect your experience differently depending on whether you’re gaming, working, or browsing the web. In this article, we will break down the key elements: resolution, color depth, refresh rate, HDR, and variable refresh rate (VRR). Understanding them will help you assess monitors yourself and make informed decisions.

Resolution refers to the number of pixels that make up a display. The higher the resolution, the more pixels, resulting in clearer and more detailed images. Popular resolutions include Full HD (1920 x 1080), Quad HD (2560 x 1440), and 4K (3840 x 2160). Gamers may prefer higher resolutions for immersive experiences, while professionals may opt for even higher resolutions, such as 4K, to meet the demands of their daily design tasks.

Color depth refers to the number of colors a monitor can display. Measured in bits per channel, the standard color depth is 8 bits per channel, capable of displaying 16.7 million colors. Professional-grade monitors typically have 10-bit or even 12-bit color depth, capable of displaying over a billion colors, which is particularly helpful for accurate image rendering.

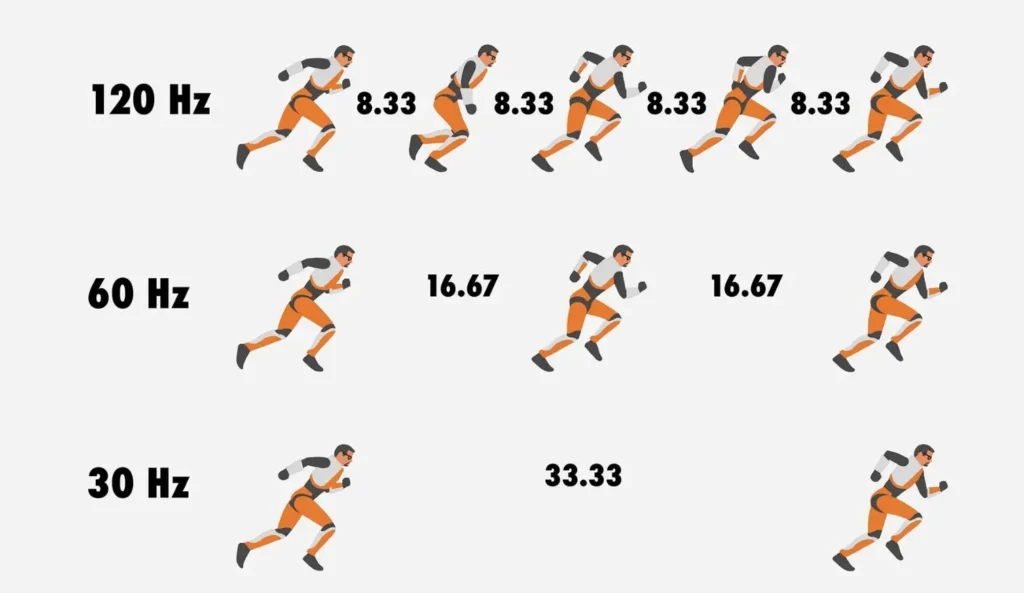

The refresh rate of a monitor indicates how many times the display updates new information per second. The higher the refresh rate (measured in Hertz), the smoother the picture. While 60 Hz is the standard refresh rate, sufficient for basic tasks, gamers typically prefer monitors with a refresh rate of at least 120 Hz or 144 Hz for a smoother gaming experience.

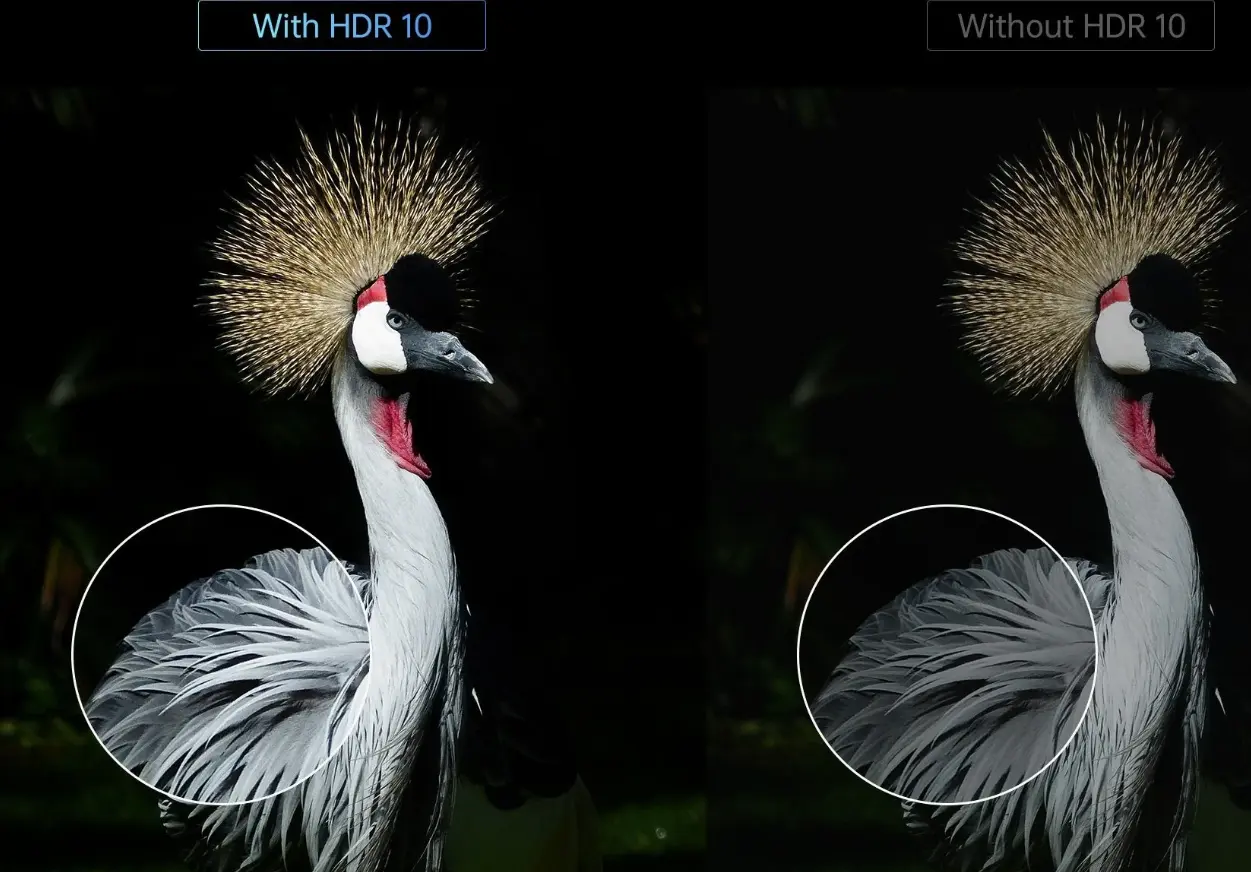

HDR stands for High Dynamic Range, a technology that enhances the contrast and color of the content being viewed, providing more vivid and realistic images. Not all monitors support HDR, and the quality can vary significantly, so understanding HDR standards such as HDR10 or Dolby Vision is crucial for understanding the expected performance of a monitor.

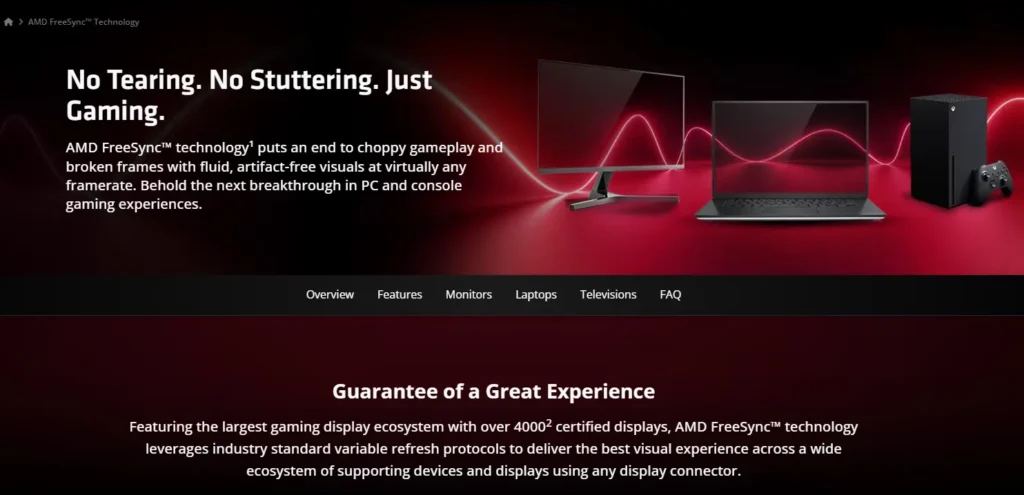

Lastly, Variable Refresh Rate (VRR) is crucial for gamers. VRR technologies such as NVIDIA’s G-SYNC or AMD’s FreeSync synchronize the monitor’s refresh rate with the output of the graphics card, reducing screen tearing and stuttering, resulting in a seamless gaming experience.

Exploring Screen Resolution and Gaming Performance: Summary and In-Depth Discussion

The relationship between screen resolution and gaming performance is crucial for determining the visual fidelity and smoothness of games. I will introduce the impact of resolution on performance and the trade-offs that gamers need to face in practical usage. Additionally, I will delve into the technical and subjective aspects of gaming at different resolutions based on current circumstances.

As professional gamers, we often find ourselves torn between pursuing the clearest visual effects and the smoothest performance. The monitor’s resolution plays a significant role in achieving these goals, so balancing the trade-offs between them is crucial.

Starting from the basics, screen resolution refers to the number of pixels that make up a display image. At a given screen size, the higher the resolution, the higher the pixel density, resulting in clearer and more detailed images. Common resolutions include 1080p (Full HD), 1440p (Quad HD), and 2160p (Ultra HD or 4K).

However, higher resolutions require more processing power. The visual difference between low and high resolutions is noticeable during gaming, but so is the cost: each frame requires rendering more pixels, which can severely reduce frame rates unless your hardware can handle the additional workload. This is where the key trade-off lies: prioritizing resolution for a more detailed picture, or opting for a lower resolution for higher and more stable frame rates.
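To put rough numbers on that workload, the sketch below counts the pixels in one frame at each of the common resolutions named above and compares them to 1080p. Pixel count is only a rough proxy for rendering cost; real frame rates depend on the game and the hardware and do not scale exactly with it. The function names are illustrative.

```python
# Pixels per frame for the common resolutions named in this section.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "2160p": (3840, 2160),
}


def pixel_count(name):
    """Return the number of pixels in one frame at the given resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def relative_load(name, baseline="1080p"):
    """Pixels per frame relative to the baseline resolution."""
    return pixel_count(name) / pixel_count(baseline)


if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_count(name):,} pixels "
              f"({relative_load(name):.2f}x 1080p)")
```

By this measure, 1440p draws about 1.78 times as many pixels per frame as 1080p, and 4K draws exactly four times as many.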

For competitive gamers, performance may outweigh the need for higher resolution. Playing at 1080p makes higher frame rates easier to reach, and a higher frame rate means smoother motion and faster response, which are crucial in fast-paced games.

Conversely, if you prefer immersion and visual detail in single-player games, higher resolutions may be more desirable. For example, 4K resolution can provide a broader and more vivid environment, resulting in an engaging gaming experience.

Another important consideration is hardware cost. High-resolution gaming typically requires not only a suitable monitor but also a powerful graphics card and processor. Before making a decision, check whether your budget covers all of these, and weigh whether moving to a higher resolution is worth it given your finances and actual needs.

Balancing screen resolution and gaming performance can be complex if we delve into the details, and there is no one-size-fits-all answer. It depends on personal preferences, the type of game, and the willingness to invest in hardware. Ultimately, our goal is simple: what kind of gaming experience do you desire the most?

In conclusion, achieving the perfect combination of resolution and performance is the pursuit of every enthusiast. With the development of display technology and the enhancement of hardware capabilities, these considerations are likely to evolve in the future. Stay informed about the latest developments to make wise decisions and enhance your gaming experience in the days to come.

Understanding Color Depth: Transitioning from Standard Dynamic Range (SDR) to High Dynamic Range (HDR)

Essentially, appreciating HDR media is like experiencing a visual feast, where the world depicted on the screen is as vivid and colorful as real life. With technological advancements, embracing HDR is no longer a choice but a necessity for those pursuing outstanding visual effects.

The spectrum of color depth reveals the simplicity of SDR and the complexity of HDR. This section primarily discusses their subtle differences, aiming to unveil how advancements in HDR technology magnify the visual media experience.

In the realm of display technology, color depth is a cornerstone of image quality. The move from Standard Dynamic Range (SDR) to High Dynamic Range (HDR) shows how much difference it makes, and seeing the two side by side is the quickest way to appreciate it.

Color depth, also known as bit depth, represents the amount of data used to capture color information in each pixel. SDR typically employs an 8-bit color depth, meaning each color channel (red, green, blue) has 256 shades, ultimately presenting approximately 16.7 million colors. Indeed, this color depth has been the standard for our visual digital media in recent years.

However, HDR completely transforms this color palette. By extending to 10 or even 12-bit color depth, HDR can display up to 1.07 billion or more colors. This remarkable enhancement not only increases the number of colors but also enhances image quality, resulting in more vibrant colors, smoother gradients, and overall more realistic imagery.
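The color counts quoted above follow directly from the bit depth. As a minimal sketch, assuming three color channels (red, green, blue) with the same number of bits each:

```python
def colors_for_bit_depth(bits_per_channel, channels=3):
    """Number of distinct colors for a given per-channel bit depth."""
    levels_per_channel = 2 ** bits_per_channel  # e.g. 256 shades at 8 bits
    return levels_per_channel ** channels


if __name__ == "__main__":
    for bits in (8, 10, 12):
        print(f"{bits}-bit: {colors_for_bit_depth(bits):,} colors")
```

This gives about 16.7 million colors at 8 bits, about 1.07 billion at 10 bits, and about 68.7 billion at 12 bits.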

In addition to quantity, HDR also involves dynamic range—the contrast between the darkest and brightest parts of an image. SDR’s dynamic range is limited, often resulting in flat and unremarkable images, with details lost in shadows or highlights. HDR significantly expands this range, preserving intricate details regardless of brightness.

The extension of HDR color depth and dynamic range not only requires compatible displays but also necessitates consideration of HDR during content creation. Transmission mediums—whether streaming services, Blu-ray, or gaming consoles—must also support the bandwidth requirements of HDR.
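For the link between a device and the display, a rough sense of the extra bandwidth comes from multiplying pixels, bits per pixel, and refresh rate. The sketch below estimates the raw, uncompressed video data rate only. It ignores blanking intervals, link encoding overhead, chroma subsampling, and compression, so real cable requirements differ, and streaming services send heavily compressed video at far lower rates. The function name is illustrative.

```python
def uncompressed_gbps(width, height, bits_per_channel, refresh_hz, channels=3):
    """Raw video data rate in gigabits per second (active pixels only)."""
    bits_per_frame = width * height * bits_per_channel * channels
    return bits_per_frame * refresh_hz / 1e9


if __name__ == "__main__":
    sdr = uncompressed_gbps(3840, 2160, 8, 60)    # 8-bit 4K at 60Hz
    hdr = uncompressed_gbps(3840, 2160, 10, 60)   # 10-bit 4K at 60Hz
    print(f"8-bit:  {sdr:.2f} Gbit/s")
    print(f"10-bit: {hdr:.2f} Gbit/s")
```

Under these assumptions, going from 8-bit to 10-bit color at the same resolution and refresh rate raises the raw data rate by 25%, from roughly 11.94 to 14.93 Gbit/s for 4K at 60Hz.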

Another advancement of HDR is its wider color gamut. While SDR primarily follows the Rec.709 color space, HDR adheres to Rec.2020 or DCI-P3, covering a broader spectrum of visible light. This means more accurate color reproduction, particularly for shades with higher saturation that SDR cannot display.

When considering the transition to HDR, it’s essential to balance content availability, display capabilities, and supporting devices. Compared to SDR, HDR offers richer colors and sharper contrast, undoubtedly a step forward. However, HDR’s advantages can only be fully realized when content creation, distribution, and display technologies are perfectly integrated.

Gamers’ Need for Speed: Understanding the Role of Refresh Rate

For gaming enthusiasts, refresh rate is crucial for creating a seamless and responsive gaming experience. This section briefly explains the key role of refresh rates and how they promote the pursuit of precision and smoothness in gaming.

In gaming, every millisecond counts. The refresh rate of a monitor, measured in Hertz (Hz), determines how many times the monitor refreshes new images per second. For gamers seeking ultimate performance, understanding and optimizing the refresh rate is crucial.

The standard refresh rate for traditional monitors is 60Hz, refreshing the screen 60 times per second. Although suitable for everyday tasks, this refresh rate cannot provide the smooth rendering required for high-speed gaming. Phenomena like motion blur or screen tearing directly affect gamers’ experiences.

To address these issues, gaming monitors with higher refresh rates—typically 120Hz, 144Hz, or even up to 240Hz—have entered the market. Such high refresh rates are expected to deliver smooth and ultra-fluid motion effects for gamers. At 120Hz, for example, the screen’s refresh rate is double that of a 60Hz monitor, significantly reducing motion blur.
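One way to read these numbers is as the time each image stays on screen. A minimal sketch of the conversion from refresh rate to refresh interval:

```python
def frame_interval_ms(refresh_hz):
    """Time between two screen refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


if __name__ == "__main__":
    for hz in (60, 120, 144, 240):
        print(f"{hz}Hz: a new image every {frame_interval_ms(hz):.2f} ms")
```

At 60Hz a new image arrives roughly every 16.67 ms. At 120Hz the interval halves to about 8.33 ms, at 144Hz it is about 6.94 ms, and at 240Hz about 4.17 ms.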

However, to take advantage of these higher refresh rates, your graphics card must be capable of outputting matching frame rates. If the frame rate produced by the graphics card is lower than the monitor’s refresh rate, the advantage diminishes. To get the most from a high-refresh monitor, the graphics card should sustain a frame rate at or near the refresh rate.

Technologies like NVIDIA’s G-SYNC and AMD’s FreeSync address this synchronization issue. These adaptive sync technologies let the monitor adjust its refresh rate to follow the frame rate output by the graphics card, minimizing tearing and stuttering without the input lag associated with traditional V-Sync.

Investing in a high-refresh-rate monitor is especially worthwhile for performance-oriented competitive gamers. In fast-paced competitive environments, reaction time determines success or failure, and the difference between 60Hz and 144Hz could mean victory or defeat.

This isn’t a binary transition from “unplayable” to “perfect,” but rather a gradient where performance improvements can be felt as the refresh rate increases. In game genres requiring high speed and precision, such as first-person shooters, racing games, or any game with fast-moving visual effects, this difference is evident.

In conclusion, refresh rate is more than just a number—it’s about how technology continually redefines the gaming landscape, both in competitive and casual gaming spheres. Moving towards higher refresh rates represents gamers’ pursuit of the perfect visual journey, where every action and reaction on the screen is instantaneous, offering players a completely different overall experience.

Variable Refresh Rate Technology: Analyzing the Battle between G-Sync and FreeSync

In the pursuit of buttery-smooth gaming graphics, the competition between NVIDIA’s G-Sync and AMD’s FreeSync variable refresh rate (VRR) technologies is fierce. Before delving into a comprehensive analysis and comparison of these two groundbreaking technologies, let’s briefly introduce their advantages and differences.

Variable refresh rate technology has become the mainstream solution in the gaming monitor market, aiming to eliminate screen tearing and stuttering by synchronizing the monitor’s refresh rate with the frame rate output by the graphics processor (GPU). Among these technologies, NVIDIA®’s G-Sync and AMD’s FreeSync stand out as prominent solutions, offering gamers the opportunity to experience smooth motion and artifact-free visual effects.

NVIDIA®’s G-Sync requires hardware integration in the monitor in the form of a proprietary module. This ensures direct synchronization between the monitor and the GPU, resulting in a premium gaming experience. G-Sync monitors typically support a variable refresh range from around 30Hz up to the monitor’s maximum rate, ensuring consistent performance across various frame rates. Additionally, they often undergo rigorous certification processes, which elevate their quality but also contribute to higher price points.

On the other hand, as the name suggests, FreeSync is a more open, royalty-free approach developed by AMD, allowing monitor manufacturers to adopt it more widely, potentially making it a more cost-effective choice. FreeSync leverages the Adaptive-Sync standard in the DisplayPort specification, as well as recent HDMI standards, to keep the monitor’s refresh rate synchronized with the frame rate output by AMD GPUs.

Both technologies aim to reduce or eliminate screen tearing and minimize input lag, thereby enhancing the immersive gaming experience. Despite these shared goals, they have inherent differences in their implementation. The openness of FreeSync means that the quality and range of adaptive refreshment may vary significantly among different monitors. G-Sync’s strict hardware and certification requirements typically result in a better user experience.

The choice between G-Sync and FreeSync has historically been tied to the user’s choice of graphics card: users with NVIDIA GPUs tended to favor G-Sync, while those with AMD GPUs preferred FreeSync. That boundary has softened. NVIDIA GPUs can now drive many FreeSync (Adaptive-Sync) monitors through the G-SYNC Compatible program, and some newer G-Sync monitors also work with AMD GPUs, providing users with more options.

While G-Sync still holds advantages in wide refresh rate windows and performance guarantees, FreeSync offers greater affordability and ease of use, particularly evident in budget-conscious scenarios. Therefore, when balancing between the perfect performance of G-Sync and the multifunctional value of FreeSync, it’s important to consider individual needs and budget constraints.

In the realm of smooth visual gaming experiences, VRR technology marks a paradigm shift. The comparison between G-Sync and FreeSync is not just a competition but also a choice for gamers. Depending on budget limitations, brand loyalty, or specific gaming setup requirements, either one may be the better fit.

The Impact of Screen Types on Viewing Pleasure

A plethora of screen types inundates the contemporary visual landscape, each with unique attributes shaping different viewing experiences. Here’s a brief summary of the relationships between screen types and their effects on users.

In the digital world, screens serve as windows through which we consume media, information, and entertainment. The type of screen we gaze upon significantly influences our overall viewing experience, from color accuracy to eye fatigue. From LCD and LED to OLED and QLED, each technology brings unique visual effects.

Modern devices utilize various screen types, primarily LCD (Liquid Crystal Display) and OLED (Organic Light-Emitting Diode). LCDs, which include subtypes like IPS (In-Plane Switching) and TN (Twisted Nematic), illuminate pixels using a backlight and are known for their widespread use and cost-effectiveness. However, they cannot produce true black like OLED, since the backlight tends to bleed through.

OLED technology stands out by allowing individual pixels to emit light independently, resulting in true blacks and incredibly high contrast—crucial for depth and immersive viewing. This independence yields vibrant, accurate colors and broader viewing angles compared to traditional backlight LCD screens.

QLED (Quantum Dot LED) is Samsung’s name for LED-backlit LCD panels with an added quantum dot layer. The quantum dots enhance the backlight, providing brighter, more saturated colors than standard LED-backlit LCDs. However, because it still relies on a backlight, it cannot match OLED’s ability to produce perfect blacks.

These differences have a tremendous impact on the viewing experience. Professionals in fields like photography, video editing, and graphic design value the color reproduction and viewing angle accuracy of IPS LCD and OLED screens. Gamers may prefer TN LCD for its faster response time despite poorer color accuracy and viewing angles. Movie enthusiasts and everyday users typically opt for OLED due to its unmatched contrast and vibrancy.

In addition to visual fidelity, screen types also affect user comfort. Prolonged screen exposure, especially to screens with inaccurate color temperatures or harsh backlighting, can lead to eye fatigue. Technologies like blue light filters and flicker-free backlight aim to counter these potential adverse effects, providing a more comfortable long-term viewing experience.

Screen size also plays a crucial role—larger screens can offer a better experience, but depending on resolution and pixel density, they may not always provide clearer images. Smaller screens sacrifice immersion for portability, but recent technological advancements allow small displays to offer intricate details.
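The interaction between screen size and resolution can be expressed as pixel density, in pixels per inch (PPI). The sketch below computes it from the resolution and the diagonal size. The screen sizes in the example are illustrative choices, not recommendations from this article.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density from the resolution and the diagonal size in inches."""
    diagonal_px = math.hypot(width_px, height_px)  # diagonal length in pixels
    return diagonal_px / diagonal_in


if __name__ == "__main__":
    examples = [
        ("24-inch 1080p", 1920, 1080, 24),
        ("32-inch 1080p", 1920, 1080, 32),
        ("27-inch 1440p", 2560, 1440, 27),
    ]
    for label, w, h, size in examples:
        print(f"{label}: {pixels_per_inch(w, h, size):.1f} PPI")
```

The same 1080p image spread over a 32-inch panel drops from about 91.8 PPI to about 68.8 PPI, which is why a larger screen at the same resolution can look softer.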

As people’s daily lives increasingly revolve around screens, choosing a screen type is not merely a matter of preference; it also affects how we perceive and interact with content. Whether prioritizing color accuracy, contrast, or eye comfort, there are plenty of choices available.

The Essence of HDR Gaming: Exploring the Actual Enhanced Visual Effects

HDR format encompasses a wider color gamut and contrast simulating real-world lighting, fundamentally transforming gamers’ visual experience. This section primarily delves into the practical significance of HDR in gaming and its resulting impact.

High Dynamic Range (HDR) has revolutionized the gaming industry, ushering in an era where subtle variations in brightness and darkness immerse players as much as the games themselves. While the term “HDR” is not unfamiliar to photographers and cinephiles, its emergence in gaming is a recent phenomenon—a visual feast in the digital age.

HDR in gaming is not just another acronym but a revolution in game rendering and display. Traditional Standard Dynamic Range (SDR) games constrain brightness and darkness levels, leading to overlooked details in overly dark shadows or washed-out highlights. HDR widens this range, encompassing a broader spectrum of brightness and finer details in the shadows, as well as richer colors.

Different HDR formats like HDR10, Dolby Vision, and HLG offer varying degrees of detail and color fidelity. HDR10 is a widely supported open standard in the industry, while Dolby Vision goes further by providing dynamic metadata, allowing adjustments on a scene-by-scene basis. HLG (Hybrid Log-Gamma) primarily targets broadcast content, but more and more games are also embracing this technology.

The impact on gamers is evident. In HDR-supported games, players can discern subtle differences hidden within the environment. With players now facing realistic shadows and light sources, stealth gameplay undergoes revolutionary changes. Racing games boast more lifelike reflections and sunlight effects, adding realism to speed.

However, the leap towards HDR is not without its challenges. To fully experience the advantages of HDR, a compatible display is required—HDR-supported TVs or monitors, along with gaming consoles or PCs that support HDR output. Additionally, there are content issues, as not all games consider HDR during development. Furthermore, its setup is complex, requiring players to calibrate their displays to achieve the most accurate visual effects.

Despite these hurdles, the actual impact on gaming is evident. HDR enhances the depth and believability of the virtual world, coming closer to mimicking the dynamic range our eyes perceive in natural vision. It heightens emotional involvement and overall immersion, qualities at the core of modern gaming.

The emergence of HDR in gaming is not just a trend but a commitment to authenticity and sensory depth. For gamers interested in subtle differences in lighting and color or those simply seeking a more realistic visual experience, HDR is the gateway to unparalleled gaming experiences. While the journey into HDR may not always be seamless, it is vivid and valuable for those eager to explore HDR beauty.

Synchronized Dance: Matching Refresh Rates and Graphics Cards

Achieving harmony between the output of your graphics card and the refresh rate of your monitor is key to a seamless visual experience. This section will delve into the required synergy and how it affects gameplay and graphics fidelity.

In the dynamic world of computer graphics, the complexity of delivering a smooth visual experience lies in the delicate balance between graphics card performance and monitor performance. The refresh rate of the monitor (the number of times the screen is redrawn per second) must be synchronized with the frame rate (frames per second, FPS) output by the graphics card in order to achieve an optimal display without screen tearing or lagging.

Graphics cards are at the heart of rendering images on the screen. They calculate the frame data and transmit it to the monitor. When the FPS exceeds the refresh rate of the monitor, screen tearing can occur, which means that parts of multiple frames are displayed in the same refresh. Conversely, if the FPS is too low, the screen stutters as it waits for the next frame to arrive.

Synchronization solutions include V-Sync, G-Sync, and FreeSync. V-Sync caps the FPS at the maximum refresh rate of the monitor to prevent screen tearing, but introduces input delay. G-Sync (NVIDIA) and FreeSync (AMD) instead offer adaptive refresh rates, with the monitor adjusting its refresh rate in real time to match the output FPS of the graphics card, eliminating tearing, reducing stutter, and minimizing input lag.
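The difference between V-Sync on a fixed-refresh monitor and an adaptive refresh rate can be sketched with a deliberately simplified model. It assumes every frame takes the same time to render, that V-Sync is classic double buffering (a finished frame waits for the next refresh), and that the adaptive range is a simple minimum and maximum in Hz. Real drivers and monitors are more involved, and the function names here are hypothetical.

```python
import math


def vsync_interval_ms(render_ms, refresh_hz):
    """Displayed frame interval with double-buffered V-Sync on a fixed refresh."""
    refresh_ms = 1000.0 / refresh_hz
    # A finished frame is held until the next refresh boundary.
    refreshes_needed = max(1, math.ceil(render_ms / refresh_ms))
    return refreshes_needed * refresh_ms


def vrr_interval_ms(render_ms, min_hz, max_hz):
    """Displayed frame interval with adaptive refresh, or None below the range."""
    shortest = 1000.0 / max_hz  # the panel cannot refresh faster than this
    longest = 1000.0 / min_hz   # nor wait longer than this between refreshes
    if render_ms > longest:
        return None  # outside the range; behavior depends on the monitor
    return max(render_ms, shortest)


if __name__ == "__main__":
    render_ms = 20.0  # a GPU delivering 50 frames per second
    print(f"V-Sync at 60Hz: {vsync_interval_ms(render_ms, 60):.2f} ms per frame")
    print(f"VRR 48-144Hz:   {vrr_interval_ms(render_ms, 48, 144):.2f} ms per frame")
```

In this model, a GPU that needs 20 ms per frame misses every other refresh on a 60Hz V-Sync display and is shown at about 33.33 ms per frame (30 FPS), while an adaptive display with a 48 to 144Hz range shows each frame after 20 ms (50 FPS).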

The benefits of these matched refresh rates for gaming are profound, as every millisecond impacts game performance. Fast-paced games especially benefit from higher refresh rates and the right pairing with graphics cards that can handle those refresh rates, resulting in less motion blur and a smoother, more responsive experience.

Additionally, higher refresh rates aren’t just for competitive gamers. Even in general use, they provide a more comfortable and less fatiguing viewing experience. As graphics cards continue to advance to produce more frames per second, the demand for monitors that match those outputs will increase every day.

To truly harness the potential of modern graphics cards, it’s crucial to ensure that monitors not only support high refresh rates but also synchronize effectively. Matching GPU performance with compatible displays isn’t just a technical challenge; it’s also about delivering a high-quality experience.

Striking the Ideal Balance Between Resolution and Refresh Rate

Understanding the interplay between resolution and refresh rate is crucial in customizing visual hardware to meet specific individual needs. This section will dissect the quest for a compromise between these two critical factors.

In a world teeming with various technological choices, discerning the optimal balance between screen resolution and refresh rate is paramount for achieving the best visual experience. Resolution determines the level of detail a screen can display, while refresh rate dictates the smoothness of motion images. Undoubtedly, higher resolution means clearer images, while a higher refresh rate provides smoother transitions. However, striking the right balance is vital for any digital experience, especially in gaming and professional visual applications.

For gamers, the crux lies in balancing the crisp visual effects of high resolutions like 4K with the smooth operation offered by high refresh rates (typically 144Hz or above). Competitive gamers requiring swift response times may prioritize higher refresh rates over resolution to gain an edge in reaction time without experiencing display lag. Conversely, content-oriented casual gamers may lean towards higher resolution for a clearer visual experience.

When it comes to professional tasks like video editing or graphic design, resolution tends to take precedence to ensure accuracy in work. Nevertheless, a moderate refresh rate remains crucial for maintaining fluidity in preview and editing interfaces.

Now, it’s not just a matter of choice but also one of compatibility. Monitors capable of both high resolution and high refresh rates can be costly and demanding on hardware. Higher resolutions demand more powerful graphics cards to maintain adequate frame rates, which must align with the monitor’s refresh rate to avoid screen tearing or jittering.
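A rough way to compare such combinations is the number of pixels the graphics card must deliver each second, which is resolution multiplied by refresh rate. This is only a first-order estimate of the demand, since rendering cost per pixel varies widely between games.

```python
def pixels_per_second(width, height, refresh_hz):
    """Pixels that must be rendered each second to fill every refresh."""
    return width * height * refresh_hz


if __name__ == "__main__":
    combos = [
        ("1080p at 240Hz", 1920, 1080, 240),
        ("1440p at 144Hz", 2560, 1440, 144),
        ("4K at 60Hz", 3840, 2160, 60),
        ("4K at 144Hz", 3840, 2160, 144),
    ]
    for label, w, h, hz in combos:
        print(f"{label}: {pixels_per_second(w, h, hz) / 1e6:.0f} million pixels/s")
```

By this estimate, 1080p at 240Hz and 4K at 60Hz ask for the same pixel throughput, while 4K at 144Hz asks for 2.4 times as much.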

In recent years, technology has been advancing rapidly, pushing the boundaries of what hardware can achieve. Monitor manufacturers strive to combine high resolutions with even higher refresh rates, while advancements in graphics cards make achieving these lofty rendering standards easier.

Finding the optimal balance between resolution and refresh rate means weighing personal preferences, cost, and hardware capabilities. Whether one values the stunning effects of high-definition imagery or the seamless operation of high-speed motion, ensuring that hardware choices reflect individual needs is crucial. With technology advancing at a rapid pace, I believe there is ample opportunity to customize digital experiences to unprecedented levels of precision and enjoyment in the future.

Prioritizing Visual Health: Advances in Monitor Eye Comfort Features

This section emphasizes the importance of eye comfort features in modern monitors, which enhance user experience beyond mere specifications. Let’s delve into how these advanced features cater to eye health needs.

In this era of rapid technological advancement, daily screen usage has become ubiquitous, from large illuminated screens on the streets, display screens at stations, and checkout counters to office computers and smartphones. The value of eye comfort features in monitors is therefore hard to overstate. Apart from the basic specifications most buyers consider, such as resolution, refresh rate, and size, these features play a crucial role in user health and long-term comfort during extended usage.

Eye comfort technologies address several factors that contribute to eye fatigue, including blue light, flickering, contrast levels, and even the physical design of the monitor itself. Blue light, a high-energy visible light, can affect sleep patterns and may contribute to eye discomfort. Monitors equipped with low blue light filters aim to reduce these effects without significantly altering color accuracy.

Screen flickering is often imperceptible but can also lead to eye fatigue and headaches. Flicker-free technology mitigates this issue by maintaining a constant light source, particularly useful in dimly lit environments. Adaptive brightness is another feature that adjusts the monitor’s brightness based on ambient light conditions, reducing eye strain in varying lighting environments.

Furthermore, the debate between flat and curved screens in monitor construction continues. Curved monitors are claimed to offer a more natural viewing angle, cover a wider field of view, reduce the eye movement that a wide flat panel requires, and potentially lower the risk of eye fatigue.

Obtaining eye comfort certifications from reputable institutions means that a monitor has passed rigorous testing to ensure it meets specific standards for minimizing eye fatigue. These certifications establish consumer trust in monitors, indicating that they are suitable for long-term use.

Monitor manufacturers are increasingly investing in eye comfort technology to address consumers’ growing concerns about health. These advancements reflect an understanding that visual devices are long-term companions in our digital lives. As consumers become more health-conscious, prioritizing eye comfort when purchasing technology products becomes essential. Continued innovation in this area is not only a trend but also a requirement for a digitally sustainable lifestyle. Monitors with these eye protection features can make long sessions more comfortable, which may in turn support better sleep, work efficiency, and overall health.

Conclusion and Final Recommendations

In summary, if you’re a gamer, opt for a 144Hz or 165Hz monitor with 2K (1440p) or 1080p resolution and 99% or 100% sRGB color coverage. If you’re a designer with high color requirements, a 60Hz monitor with good color accuracy is a better choice.

Remember, while we often say “you get what you pay for”, the cost of some products isn’t as exaggerated as you might think. For instance, the cost of 4K monitors has significantly decreased in recent years. So, don’t be too quick to dismiss affordable options.